Agenta vs diffray

Side-by-side comparison to help you choose the right tool.

Agenta

Agenta centralizes LLM prompt management and evaluation, enhancing collaboration for reliable AI app development.

Last updated: March 1, 2026

diffray

diffray's AI agents catch real bugs during code review to improve software quality.

Last updated: February 28, 2026

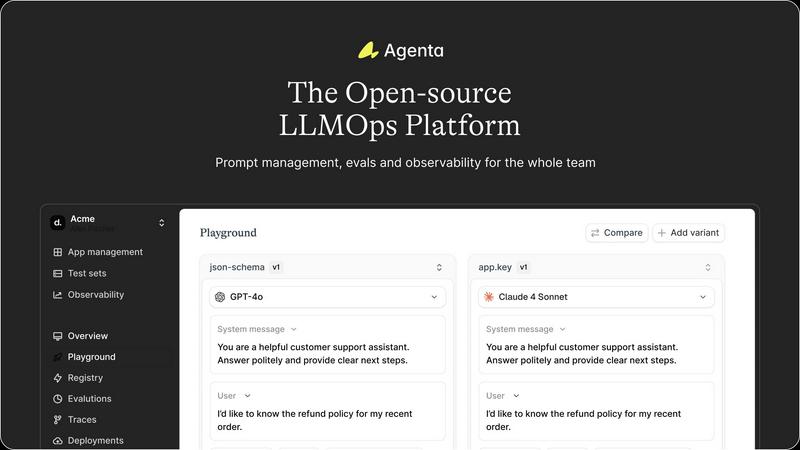

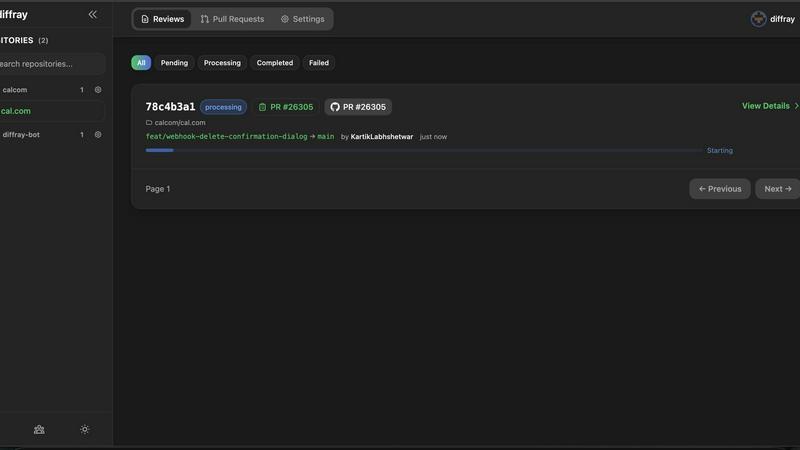

Visual Comparison

Agenta

diffray

Feature Comparison

Agenta

Centralized Prompt Management

Agenta provides a single platform for storing and managing prompts, evaluations, and traces, so these resources are no longer scattered across separate tools. With everything in one place, teams can find and organize their work easily and collaborate from a shared source of truth.

Automated Evaluation

The platform includes an automated evaluation system that lets teams run experiments systematically, track results, and validate every change to their LLM applications. It supports several kinds of evaluators, including LLM-as-a-judge and custom code evaluators, so teams can replace guesswork with evidence.
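To make the idea of a custom code evaluator concrete, here is a minimal Python sketch. It does not use Agenta's SDK: the `EvalCase` type, the `exact_match_evaluator` and `run_evaluation` functions, and the two prompt variants are all hypothetical, chosen only to show how a code evaluator scores each output and how scores are aggregated across a test set to compare variants.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical test-case format; not Agenta's data model.
@dataclass
class EvalCase:
    inputs: str
    expected: str


def exact_match_evaluator(output: str, expected: str) -> float:
    """Hypothetical custom code evaluator: 1.0 if the normalized output matches, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(
    app: Callable[[str], str],
    cases: list[EvalCase],
    evaluator: Callable[[str, str], float],
) -> float:
    """Run the app on every case and return the mean evaluator score (0.0 for no cases)."""
    scores = [evaluator(app(case.inputs), case.expected) for case in cases]
    return sum(scores) / len(scores) if scores else 0.0


# Two hypothetical prompt variants, stubbed so the sketch runs without an LLM.
def variant_a(question: str) -> str:
    return {"capital of France?": "Paris", "2 + 2?": "4"}.get(question, "unknown")


def variant_b(question: str) -> str:
    return "Paris"


cases = [EvalCase("capital of France?", "Paris"), EvalCase("2 + 2?", "4")]
print(run_evaluation(variant_a, cases, exact_match_evaluator))  # 1.0
print(run_evaluation(variant_b, cases, exact_match_evaluator))  # 0.5
```

In a real setup the variants would call a model, and an LLM-as-a-judge evaluator would replace the string comparison with a model call that returns a score; the aggregation step stays the same.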

Comprehensive Observability

Agenta provides observability by letting users trace every request and pinpoint exactly where their AI systems fail. Beyond debugging, teams can gather user feedback, annotate traces, and turn any trace into a test with a single click, which closes the feedback loop efficiently.

Collaborative Workflow

The platform brings product managers, developers, and domain experts into a single workflow. Through its user-friendly interface, domain experts can safely edit and experiment with prompts without coding skills, and the whole team can run evaluations and experiments in the same place, which improves overall productivity.

diffray

Multi-Agent Specialized Architecture

At the core of diffray is a multi-agent system of more than 30 AI agents, each trained for a specific review discipline. Instead of one model trying to do everything, a dedicated security agent scans for vulnerabilities such as SQL injection, a performance agent identifies inefficient loops and memory leaks, a bug-detection agent catches logical errors, and so on. This specialization enables deep, contextual analysis that generic tools miss, producing more relevant and accurate feedback on every pull request.
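The Python sketch below illustrates the general pattern of routing one change through several specialized reviewers and merging their categorized findings. It is not diffray's implementation: the `Finding` type, the `security_agent` and `bug_agent` functions, the `AGENTS` registry, and the regex heuristics are hypothetical stand-ins for what would be trained models in a real system. The security check also shows the kind of distinction a specialist makes, flagging SQL assembled from strings while leaving a parameterized query alone.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    agent: str    # which specialist raised the issue
    line: int     # 1-based index into the list of added lines
    message: str


# Hypothetical security specialist: flags SQL built with f-strings or "+" concatenation.
SQL_FROM_STRINGS = re.compile(r"execute\(\s*(f[\"']|[^)]*\+)")


def security_agent(lines: list[str]) -> list[Finding]:
    return [
        Finding("security", i, "SQL built from strings; use a parameterized query")
        for i, text in enumerate(lines, start=1)
        if SQL_FROM_STRINGS.search(text)
    ]


# Hypothetical bug-detection specialist: flags bare `except:` clauses.
BARE_EXCEPT = re.compile(r"^\s*except\s*:")


def bug_agent(lines: list[str]) -> list[Finding]:
    return [
        Finding("bug", i, "bare except hides unexpected errors")
        for i, text in enumerate(lines, start=1)
        if BARE_EXCEPT.search(text)
    ]


# Registry of specialists; a real system would hold many more, each backed by a model.
AGENTS: dict[str, Callable[[list[str]], list[Finding]]] = {
    "security": security_agent,
    "bug": bug_agent,
}


def review(added_lines: list[str]) -> list[Finding]:
    """Run every specialist on the same change and merge findings ordered by line."""
    findings: list[Finding] = []
    for agent in AGENTS.values():
        findings.extend(agent(added_lines))
    return sorted(findings, key=lambda f: (f.line, f.agent))


# A small hypothetical change (added lines only).
diff = [
    'cur.execute("SELECT * FROM users WHERE id = " + user_id)',
    "try:",
    "    risky()",
    "except:",
    "    pass",
]
for f in review(diff):
    print(f"[{f.agent}] line {f.line}: {f.message}")
```

On the sample change, the sketch reports the concatenated query on line 1 and the bare `except` on line 4. Keeping each concern in its own function mirrors the idea that specialists can be tuned independently without one generalist trying to cover everything.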

Drastically Reduced False Positives

One of the biggest frustrations with automated code review is noise: irrelevant or incorrect suggestions that developers must sift through. diffray's targeted agents are designed to minimize this noise by applying domain-specific rules and context-aware analysis to filter out irrelevant alerts. According to diffray, this yields an approximately 87% reduction in false positives compared to conventional tools, so developers can trust the feedback they receive and focus on fixing genuine issues.

Comprehensive Issue Detection

While reducing noise, diffray also strengthens the signal. Its specialized agents work together to examine each change from every critical angle. diffray reports a threefold increase in the detection of real, substantive issues, from subtle security flaws and performance bottlenecks to deviations from best practices and bugs that would otherwise reach production. In effect, it automates an entire expert review panel within your workflow.

Seamless CI/CD Integration

diffray is built for modern developer workflows and integrates directly with the tools teams already use. It connects natively with GitHub and GitLab and posts detailed comments, categorized by agent, directly on pull requests. Developers do not need to change their habits: reviews run automatically on every PR and deliver instant, actionable feedback inside the existing development environment and CI/CD pipeline.

Use Cases

Agenta

Streamlined Prompt Development

Agenta is ideal for teams looking to streamline the prompt development process. By centralizing prompts and facilitating collaboration among team members, it allows for quicker iterations and improvements, leading to more reliable LLM applications.

Enhanced Experimentation

Teams can use Agenta's unified playground to compare different prompts and models side by side. The playground also tracks versions and changes, giving teams a structured environment for continuous improvement.

Efficient Debugging

When production issues arise, Agenta's observability features allow teams to trace errors back to their source quickly. The ability to annotate traces and convert them into tests enables teams to resolve issues efficiently, minimizing downtime and enhancing system reliability.

Informed Decision-Making

Agenta helps teams make data-driven decisions by combining automated evaluation with human evaluation and expert feedback in the same workflow. Teams can validate each change and confirm that their LLM applications meet high performance standards.

diffray

Accelerating Enterprise Development Cycles

Large development teams working on complex applications face immense pressure to release features quickly without compromising quality, and manual reviews become a major bottleneck. diffray integrates into their enterprise GitHub or GitLab setup and provides instant, expert-level preliminary reviews on every PR. This cuts initial review time and lets senior engineers focus on architectural feedback rather than hunting for basic bugs, accelerating development while maintaining high code standards at scale.

Enhancing Security Posture for Startups

Startups and small teams often lack dedicated security expertise, which leaves their code vulnerable to attacks. diffray acts as an always-available security expert: its specialized security agent automatically scans every pull request for common and advanced vulnerabilities (e.g., XSS, insecure dependencies, hard-coded secrets). Catching these issues early keeps security debt from accumulating and helps startups build securely from the ground up, which is crucial for trust and compliance.

Maintaining Code Quality in Fast-Growing Teams

As teams scale and onboard new developers, maintaining consistent code quality and adherence to best practices becomes challenging. diffray enforces code standards automatically. Its best-practice and style-guide agents review every PR for consistency, readability, and adherence to team conventions, acting as a tireless mentor for new hires and a consistency check for everyone. This ensures the codebase remains clean, maintainable, and scalable as the team grows.

Reducing Bug Escape to Production

Even with thorough testing, subtle logical bugs and edge cases can escape into production, causing outages and user dissatisfaction. diffray’s bug-detection and logic-analysis agents scrutinize code changes for these hard-to-find issues—like race conditions, null pointer exceptions, or incorrect boundary conditions. By catching them at the PR stage, it significantly reduces bug escape rates, leading to more stable releases and less firefighting for the engineering team.

Overview

About Agenta

Agenta is an open-source LLMOps platform for developing and deploying reliable large language model (LLM) applications. It serves as a collaborative hub where developers, product managers, and subject matter experts work together and avoid the common pitfalls of LLM development. By centralizing prompt management, evaluation, and observability, Agenta addresses problems such as disorganized prompts, siloed communication, and inadequate validation. Teams can experiment with prompts, track version history, and debug production issues effectively. The result is a more productive workflow, more reliable LLM applications, and a faster time to market.

About diffray

In the fast-paced world of software development, code review is a critical bottleneck. Teams struggle with lengthy review cycles, generic feedback that misses important issues, and an overwhelming number of false positives that waste developer time and erode trust in automated tools. This inefficiency slows releases and risks letting bugs, security flaws, and performance issues slip into production.

diffray is engineered to solve this problem. It is an AI-powered code review assistant that turns pull request (PR) analysis from a tedious, error-prone task into a fast, precise, and insightful process. Unlike tools that rely on a single general-purpose AI model, diffray uses a multi-agent architecture with more than 30 specialized AI agents. Each agent focuses on a specific domain, such as security vulnerabilities, performance anti-patterns, common bugs, language-specific best practices, and even SEO for web code. This targeted approach lets diffray analyze every code change in context and from multiple angles, which substantially improves accuracy.

diffray reports an 87% reduction in false positives and a 3x increase in real, actionable issues detected. Designed for development teams of all sizes, it integrates with existing GitHub and GitLab workflows and helps teams ship higher-quality code faster, cutting average PR review time from 45 minutes to 12 minutes per developer per week.

Frequently Asked Questions

Agenta FAQ

What makes Agenta different from other LLMOps platforms?

Agenta stands out due to its open-source nature, which fosters community collaboration, and its comprehensive feature set that centralizes prompt management, evaluation, and observability, enabling efficient workflows for AI teams.

Can Agenta integrate with existing tools and frameworks?

Yes. Agenta is designed to integrate with popular frameworks and model providers, including LangChain, LlamaIndex, and OpenAI, so teams can keep their existing tech stack without vendor lock-in.

How does Agenta support collaboration among team members?

Agenta provides a collaborative environment where product managers, developers, and domain experts work together on prompt development, evaluations, and debugging, all within a user-friendly interface that makes it easy for every team member to participate.

Is there a community for Agenta users to share ideas and ask questions?

Absolutely! Agenta has an active community on Slack where users can connect, share ideas, seek support, and contribute to the platform's development, fostering a collaborative spirit among AI builders.

diffray FAQ

How does diffray's multi-agent system differ from a single AI model?

A single AI model is a generalist; it has broad knowledge but lacks deep expertise in any specific area, often leading to generic or incorrect suggestions. diffray's multi-agent system is like having a team of specialists. Each of the 30+ agents is finely tuned for a specific domain (security, performance, etc.). They work together to provide a layered, context-rich analysis that is far more accurate and comprehensive, which is why we see drastically fewer false positives and many more real issues found.

What platforms and version control systems does diffray integrate with?

diffray is designed for seamless integration into modern development workflows. It currently offers direct, native integrations with GitHub and GitLab, the two most popular version control and collaboration platforms. Once installed, it automatically analyzes pull requests and merge requests, posting comments directly in the interface developers use every day, with no need for context-switching to a separate dashboard.

How does diffray achieve an 87% reduction in false positives?

This reduction is a direct result of our specialized agent architecture. Each agent uses domain-specific rules, patterns, and contextual understanding to evaluate code. For example, the security agent knows the difference between a real vulnerability and a benign code pattern that looks similar. This precision allows agents to filter out the "noise" that generic tools flag, ensuring that the vast majority of alerts raised are legitimate and actionable for the developer.

Is diffray suitable for small development teams or solo developers?

Absolutely. While diffray delivers tremendous value at scale for large teams, it is equally powerful for small teams and solo developers. It acts as an always-available peer reviewer, catching issues that a single pair of eyes might miss. For small teams, it enforces quality and security standards from the start, preventing technical debt and helping them build robust products efficiently without the need for a large, senior-led review process.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform that centralizes prompt management and evaluation, specifically designed for building and deploying reliable large language model applications. Users often seek alternatives to Agenta due to various factors, including pricing, specific features that may better suit their needs, integration with existing workflows, or preferences for platform usability. When exploring alternatives, it is essential to consider factors such as ease of use, the robustness of features, the level of community support, and how well the platform aligns with your team's unique requirements.

diffray Alternatives

diffray is an AI-powered code review tool in the development category, designed to streamline the pull request process. It uses a multi-agent system to catch real bugs and enforce best practices with minimal false positives, aiming to significantly cut down review time and improve code quality. Users often explore alternatives for various reasons. These can include budget constraints, the need for integration with specific platforms or CI/CD pipelines, or a requirement for different feature sets like support for particular programming languages or frameworks. The search for the right tool is highly individual to a team's workflow and technical stack. When evaluating alternatives, key considerations should be the accuracy of feedback and the reduction of noise, the tool's understanding of your codebase context, and the overall impact on developer velocity. The goal is to find a solution that integrates seamlessly, provides actionable insights, and ultimately makes the review process more efficient without sacrificing depth.