Agenta vs Fallom

Side-by-side comparison to help you choose the right tool.

Agenta centralizes LLM prompt management and evaluation, enhancing collaboration for reliable AI app development.

Last updated: March 1, 2026

Fallom delivers real-time observability for AI agents, ensuring precise tracking, debugging, and cost management.

Last updated: February 28, 2026

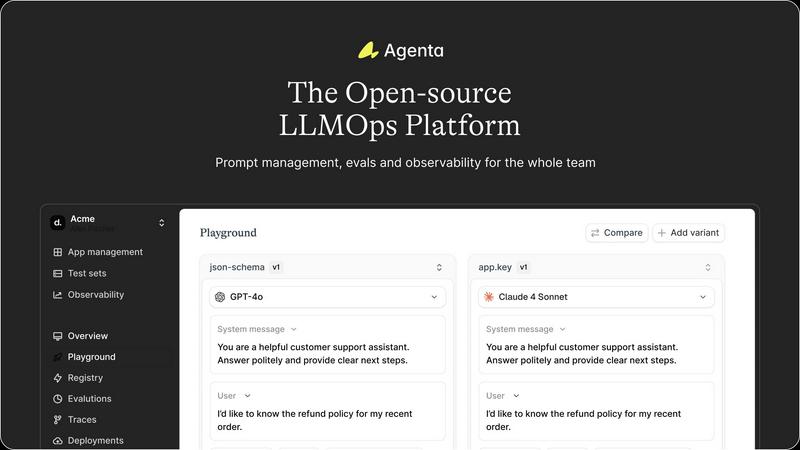

Visual Comparison

Agenta

Fallom

Feature Comparison

Agenta

Centralized Prompt Management

Agenta provides a single platform for storing and managing prompts, evaluations, and traces, so these resources are no longer scattered across tools. Teams can find and organize their work in one place, which makes collaboration easier.

Automated Evaluation

The platform features an automated evaluation system that enables teams to systematically run experiments, track results, and validate each change made to their LLM applications. By integrating various evaluators, including LLM-as-a-judge and custom code evaluators, teams can replace guesswork with evidence-based insights.
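To make the idea of a custom code evaluator concrete, here is a minimal sketch in plain Python. It does not use Agenta's SDK; `EvalCase`, `fake_app`, `exact_match`, and `run_experiment` are hypothetical names invented for illustration, and `fake_app` stands in for a real LLM application.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    """One row of a test set: an input and the output we expect."""
    prompt_input: str
    expected: str


def fake_app(text: str) -> str:
    # Hypothetical stand-in for the LLM application under test.
    return text.strip().upper()


def exact_match(output: str, expected: str) -> float:
    # Custom code evaluator: 1.0 when the output equals the expectation.
    return 1.0 if output == expected else 0.0


def run_experiment(
    app: Callable[[str], str],
    evaluator: Callable[[str, str], float],
    cases: List[EvalCase],
) -> dict:
    # Run every case through the app, score it, and summarize the scores.
    scores = [evaluator(app(c.prompt_input), c.expected) for c in cases]
    return {"n": len(scores), "mean_score": sum(scores) / len(scores), "scores": scores}


cases = [EvalCase(" hello ", "HELLO"), EvalCase("bye", "BYE"), EvalCase("ok", "Ok")]
result = run_experiment(fake_app, exact_match, cases)
print(result)
```

Rerunning the same experiment after each prompt or model change gives a score to compare against, which is the "evidence instead of guesswork" loop described above. An LLM-as-a-judge evaluator would have the same shape, with the scoring function calling a model instead of comparing strings.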

Comprehensive Observability

Agenta enhances observability by allowing users to trace every request and identify exact failure points within their AI systems. This capability not only aids in debugging but also enables teams to gather user feedback, annotate traces, and turn any trace into a test with a single click, closing the feedback loop efficiently.

Collaborative Workflow

The platform brings product managers, developers, and domain experts into a single workflow. Through its user-friendly interface, domain experts can safely edit and experiment with prompts without needing coding skills. Evaluations and experiments are conducted within the same workflow.

Fallom

Comprehensive LLM Call Tracing

Fallom offers real-time observability for AI agents by letting teams track and analyze every LLM call. Users can see the timing and cost associated with each call, which supports confident debugging.
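As a rough illustration of what per-call tracing captures, the sketch below wraps a function and records its prompt, output, latency, and an approximate token count. This is not Fallom's SDK; `traced`, `CallRecord`, `TRACE_LOG`, and `fake_llm` are hypothetical names, and the whitespace-based token count is a crude placeholder for a real tokenizer.

```python
import time
from dataclasses import dataclass
from functools import wraps
from typing import Callable, List


@dataclass
class CallRecord:
    name: str
    prompt: str
    output: str
    latency_s: float
    total_tokens: int


# In-memory log; a real system would export records to a backend.
TRACE_LOG: List[CallRecord] = []


def traced(fn: Callable[[str], str]) -> Callable[[str], str]:
    """Record one CallRecord for every call to the wrapped function."""
    @wraps(fn)
    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        output = fn(prompt)
        latency = time.perf_counter() - start
        # Assumption: words approximate tokens for illustration only.
        tokens = len(prompt.split()) + len(output.split())
        TRACE_LOG.append(CallRecord(fn.__name__, prompt, output, latency, tokens))
        return output
    return wrapper


@traced
def fake_llm(prompt: str) -> str:
    # Hypothetical stand-in for a model call.
    return "echo: " + prompt


fake_llm("what is the refund policy")
print(TRACE_LOG[0])
```

Keeping the record as structured data, rather than a log string, is what makes later steps such as cost attribution and session grouping straightforward.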

Cost Attribution and Transparency

With Fallom, organizations can effectively track their spending across different models, users, and teams. This feature delivers full cost transparency, making budgeting and chargeback processes seamless and accurate.
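Cost attribution of this kind comes down to pricing each call and summing along a chosen dimension. The following is a minimal sketch under stated assumptions: the model names, the prices in `PRICE_PER_1K`, and the record fields are all made up for illustration and do not reflect Fallom's data model or any provider's real pricing.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K = {
    "model-a": {"input": 0.50, "output": 1.50},
    "model-b": {"input": 0.10, "output": 0.20},
}

# Hypothetical call records with token counts and ownership metadata.
calls = [
    {"model": "model-a", "user": "alice", "team": "search", "input_tokens": 1000, "output_tokens": 2000},
    {"model": "model-b", "user": "alice", "team": "search", "input_tokens": 4000, "output_tokens": 1000},
    {"model": "model-a", "user": "bob", "team": "support", "input_tokens": 2000, "output_tokens": 0},
]


def call_cost(call: dict) -> float:
    # Price input and output tokens separately, as many providers do.
    price = PRICE_PER_1K[call["model"]]
    return (
        call["input_tokens"] / 1000 * price["input"]
        + call["output_tokens"] / 1000 * price["output"]
    )


def attribute(calls: list, key: str) -> dict:
    # Sum per-call cost along one dimension: "model", "user", or "team".
    totals = defaultdict(float)
    for call in calls:
        totals[call[key]] += call_cost(call)
    return dict(totals)


by_model = attribute(calls, "model")
by_user = attribute(calls, "user")
by_team = attribute(calls, "team")
print(by_model, by_user, by_team)
```

Because every call carries its own model, user, and team labels, the same records can feed both a budget view and a chargeback report without being re-collected.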

Enterprise-Grade Compliance

Fallom is equipped with compliance-ready capabilities that provide complete audit trails to support regulatory requirements. Features include input/output logging, model versioning, and user consent tracking, ensuring that organizations meet standards such as GDPR and the EU AI Act.

Real-time Monitoring and Session Tracking

The platform enables live monitoring of LLM usage, allowing teams to spot anomalies before they escalate into serious incidents. Additionally, session tracking groups traces by user or customer, providing complete context for performance analysis.
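Session tracking and anomaly spotting can be pictured with a small sketch. The trace fields, the `group_by_session` and `slow_sessions` helpers, and the fixed latency threshold are assumptions for illustration; they are not Fallom's API, and a production system would likely use a more robust anomaly rule.

```python
from collections import defaultdict

# Hypothetical trace records, each tagged with a session and a latency.
traces = [
    {"session_id": "s1", "user": "alice", "latency_ms": 120},
    {"session_id": "s2", "user": "bob", "latency_ms": 95},
    {"session_id": "s1", "user": "alice", "latency_ms": 2400},
    {"session_id": "s2", "user": "bob", "latency_ms": 110},
]


def group_by_session(traces: list) -> dict:
    # Collect all traces that share a session_id, preserving order.
    sessions = defaultdict(list)
    for trace in traces:
        sessions[trace["session_id"]].append(trace)
    return dict(sessions)


def slow_sessions(sessions: dict, threshold_ms: int) -> list:
    # Flag sessions containing at least one call slower than the threshold.
    return sorted(
        sid
        for sid, items in sessions.items()
        if any(t["latency_ms"] > threshold_ms for t in items)
    )


sessions = group_by_session(traces)
flagged = slow_sessions(sessions, threshold_ms=1000)
print(flagged)
```

Grouping first means a flagged call is seen alongside the other calls in the same session, which is the "complete context" the paragraph above refers to.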

Use Cases

Agenta

Streamlined Prompt Development

Agenta is ideal for teams looking to streamline the prompt development process. By centralizing prompts and facilitating collaboration among team members, it allows for quicker iterations and improvements, leading to more reliable LLM applications.

Enhanced Experimentation

Teams can leverage Agenta's unified playground to experiment with different prompts and models side-by-side. This feature not only simplifies the comparison process but also provides a structured environment for tracking versions and changes, promoting continuous improvement.

Efficient Debugging

When production issues arise, Agenta's observability features allow teams to trace errors back to their source quickly. The ability to annotate traces and convert them into tests enables teams to resolve issues efficiently, minimizing downtime and enhancing system reliability.

Informed Decision-Making

Agenta empowers teams to make data-driven decisions through its automated evaluation and feedback integration. By incorporating human evaluation and expert feedback into the workflow, teams can validate their changes and ensure that their LLM applications meet high standards of performance.

Fallom

Optimizing AI Workflows

Organizations can utilize Fallom to optimize their AI workflows by analyzing LLM call data, identifying bottlenecks, and improving response times. This leads to enhanced efficiency in operations involving AI agents.

Ensuring Compliance in AI Deployments

Fallom's robust compliance features make it ideal for organizations operating in regulated industries. Businesses can maintain compliance with data protection regulations while ensuring that their AI systems are transparent and accountable.

Cost Management in AI Operations

Companies can leverage Fallom to gain insights into their LLM usage costs. By tracking expenses on a per-model and per-user basis, organizations can make informed budgeting decisions and manage their AI investments effectively.

Debugging and Performance Enhancement

Fallom's real-time monitoring capabilities allow teams to debug issues quickly and enhance the performance of their AI agents. By identifying latency problems and performance regressions, organizations can ensure a smoother user experience.

Overview

About Agenta

Agenta is an open-source LLMOps platform for developing and deploying reliable large language model (LLM) applications. It serves as a collaborative hub for AI teams, including developers, product managers, and subject matter experts. By centralizing prompt management, evaluation, and observability, Agenta addresses disorganized prompts, siloed communication, and inadequate validation processes. Teams can experiment with prompts, track version histories, and debug production issues, with the aim of more reliable LLM applications and a faster time-to-market.

About Fallom

Fallom is an AI-native observability platform built for large language model (LLM) and agent workloads. It lets users track every LLM call in production through end-to-end tracing that captures prompts, outputs, tool calls, tokens, latency, and per-call costs. The platform is designed for businesses that run AI agents and need to monitor and optimize their LLM usage. User-level and session-level context helps teams understand performance metrics and usage patterns. Fallom also addresses enterprise compliance needs with logging, model versioning, and consent tracking. With a single OpenTelemetry-native SDK, teams can instrument their applications in minutes and then use live monitoring, debugging, and cost attribution across models, users, and teams.

Frequently Asked Questions

Agenta FAQ

What makes Agenta different from other LLMOps platforms?

Agenta stands out due to its open-source nature, which fosters community collaboration, and its comprehensive feature set that centralizes prompt management, evaluation, and observability, enabling efficient workflows for AI teams.

Can Agenta integrate with existing tools and frameworks?

Yes, Agenta is designed to seamlessly integrate with popular frameworks and models, including LangChain, LlamaIndex, and OpenAI, ensuring that teams can leverage their existing tech stack without vendor lock-in.

How does Agenta support collaboration among team members?

Agenta provides a collaborative environment where product managers, developers, and domain experts can work together on prompt development, evaluations, and debugging, all within a user-friendly interface that encourages participation from all team members.

Is there a community for Agenta users to share ideas and ask questions?

Yes. Agenta has an active community on Slack where users can connect, share ideas, seek support, and contribute to the platform's development.

Fallom FAQ

What industries can benefit from using Fallom?

Fallom is tailored for organizations that rely on AI agents across various industries, including finance, healthcare, retail, and technology, enabling them to optimize their AI operations and ensure compliance.

How quickly can I integrate Fallom into my existing systems?

With Fallom's OpenTelemetry-native SDK, teams can set up and instrument their applications in under five minutes, so live monitoring can begin right away.

What compliance standards does Fallom support?

Fallom is designed to meet various compliance standards, including GDPR, the EU AI Act, and SOC 2, providing organizations with the necessary tools to maintain regulatory compliance in their AI operations.

Can Fallom help with debugging AI models?

Yes, Fallom provides features that allow teams to debug their AI models efficiently. With real-time monitoring and session tracking, users can quickly identify latency issues and performance regressions, leading to improved model performance.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform that centralizes prompt management and evaluation, specifically designed for building and deploying reliable large language model applications. Users often seek alternatives to Agenta due to various factors, including pricing, specific features that may better suit their needs, integration with existing workflows, or preferences for platform usability. When exploring alternatives, it is essential to consider factors such as ease of use, the robustness of features, the level of community support, and how well the platform aligns with your team's unique requirements.

Fallom Alternatives

Fallom is an AI-native observability platform that provides real-time tracking and insights for large language model (LLM) and agent workloads, covering debugging, cost management, and compliance with regulatory standards. Users may look for alternatives to Fallom because of pricing, specific feature sets, or integration capabilities that better fit their needs. When evaluating an alternative, consider its observability capabilities, ease of integration with existing systems, and the breadth of analytics it provides. Also check how well it supports your compliance requirements and cost tracking.