Lovalingo vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

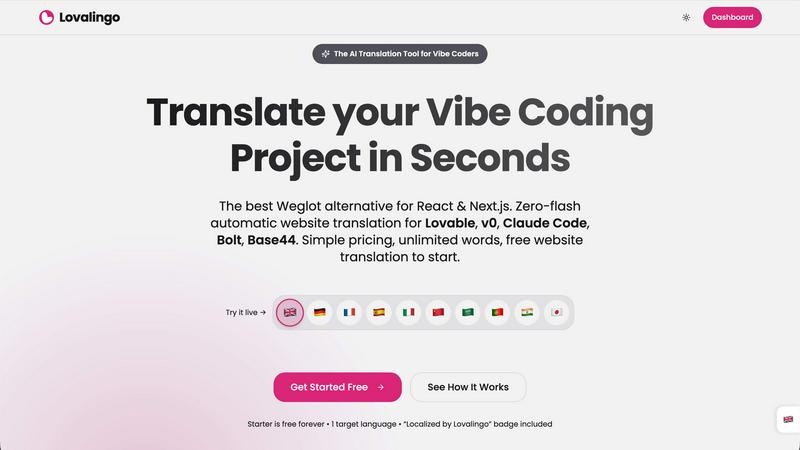

Lovalingo

Translate and index your React apps in seconds with seamless, zero-flash localization and automated SEO features.

Last updated: February 27, 2026

OpenMark AI

OpenMark AI benchmarks over 100 LLMs on your specific task to find the best model for cost, speed, and quality.

Last updated: March 26, 2026

Feature Comparison

Lovalingo

Native SEO

Lovalingo automatically generates multilingual sitemaps, hreflang tags, and meta descriptions, ensuring that your site is indexed globally from day one. This feature eliminates the manual effort typically required to optimize for search engines in different languages.
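To make the hreflang part concrete, here is a minimal sketch of the tags a multilingual page typically carries. It is not Lovalingo's API: the `buildHreflangTags` helper, the locale-prefixed URL layout (`/fr/pricing`), and the `example.com` origin are all assumptions made for illustration.

```typescript
// Hypothetical helper, not part of Lovalingo's API.
// Assumes non-default locales live under a path prefix, e.g. /fr/pricing.
function buildHreflangTags(
  origin: string,
  path: string,
  locales: string[],
  defaultLocale: string,
): string[] {
  const normalizedPath = path.startsWith("/") ? path : `/${path}`;
  const hrefFor = (locale: string): string =>
    locale === defaultLocale
      ? `${origin}${normalizedPath}`
      : `${origin}/${locale}${normalizedPath}`;

  // One alternate link per language version of the page.
  const tags = locales.map(
    (locale) =>
      `<link rel="alternate" hreflang="${locale}" href="${hrefFor(locale)}" />`,
  );
  // x-default marks the fallback URL when no listed language matches.
  tags.push(
    `<link rel="alternate" hreflang="x-default" href="${hrefFor(defaultLocale)}" />`,
  );
  return tags;
}

const hreflangTags = buildHreflangTags(
  "https://example.com",
  "/pricing",
  ["en", "fr", "de"],
  "en",
);
```

Every language version of a page should list the full set of alternates, which is the kind of repetitive bookkeeping that makes automation attractive here.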

Zero-Flash UI

Unlike translation tools such as Weglot, which manipulate the DOM after the page loads, Lovalingo translates during the React rendering process. The interface therefore renders without flickering or layout shifts, which improves the overall user experience.

Vibe-Coding Ready

Lovalingo is compatible with popular vibe-coding tools such as Lovable, Bolt, and v0. By adding one script, developers can make their applications available in additional languages, which simplifies managing multilingual content.

Zero Maintenance

With Lovalingo, there are no JSON translation files to maintain. The tool automatically detects routes and updates content in real time, so your application stays current with its translations without manual intervention.

OpenMark AI

Plain Language Task Description

You don't need to be a prompt engineering expert to start benchmarking. OpenMark AI allows you to describe the task you want to test in simple, natural language. The platform then configures the benchmark based on your description, making advanced LLM evaluation accessible to developers, product managers, and teams without deep technical expertise in model fine-tuning or complex setup procedures.

Multi-Model Comparison in One Session

Instead of manually testing models one by one across different platforms, OpenMark AI lets you run your identical prompt against dozens of models simultaneously. This side-by-side testing environment provides an immediate, apples-to-apples comparison, saving hours of manual work and providing clear, actionable insights into which model performs best for your specific use case.

Real Cost & Performance Metrics

The platform goes beyond simple accuracy scores. It executes real API calls to each model and reports back the actual cost per request, latency, and a scored quality metric based on your task. This gives you a complete picture of the trade-offs between speed, expense, and effectiveness, allowing for true cost-efficiency calculations before you commit to an API.
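The trade-off described above can be sketched as simple arithmetic. The following is an illustration only: the `RunResult` and `ModelPricing` shapes, the per-million-token pricing model, and the sample numbers are assumptions, not OpenMark AI's schema or real provider prices.

```typescript
// Hypothetical shapes: field names are illustrative, not OpenMark AI's schema.
interface ModelPricing {
  inputUsdPerMillionTokens: number;
  outputUsdPerMillionTokens: number;
}

interface RunResult {
  inputTokens: number;
  outputTokens: number;
  latencyMs: number;
  qualityScore: number; // assumed to be normalized to the range 0..1
}

// Cost of one request under token-based pricing.
function costPerRequestUsd(run: RunResult, pricing: ModelPricing): number {
  return (
    (run.inputTokens * pricing.inputUsdPerMillionTokens +
      run.outputTokens * pricing.outputUsdPerMillionTokens) /
    1e6
  );
}

// Quality per dollar: higher means more quality for the same spend.
function qualityPerDollar(run: RunResult, pricing: ModelPricing): number {
  const cost = costPerRequestUsd(run, pricing);
  return cost > 0 ? run.qualityScore / cost : Infinity;
}

// Made-up sample values for illustration.
const exampleRun: RunResult = {
  inputTokens: 1200,
  outputTokens: 300,
  latencyMs: 850,
  qualityScore: 0.9,
};
const examplePricing: ModelPricing = {
  inputUsdPerMillionTokens: 2,
  outputUsdPerMillionTokens: 8,
};
const exampleCost = costPerRequestUsd(exampleRun, examplePricing);
const exampleEfficiency = qualityPerDollar(exampleRun, examplePricing);
```

Ranking models by a ratio like this, alongside latency, is one way to compare a cheaper model with slightly lower quality against a pricier one.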

Stability and Variance Analysis

A single, lucky output from a model is misleading. OpenMark AI runs your task multiple times for each model to measure consistency. The results show variance across these repeat runs, highlighting which models produce stable, reliable outputs and which ones are unpredictable. This is crucial for deploying production features that users can depend on.
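One common way to quantify this kind of consistency is the standard deviation of quality scores across repeat runs. The sketch below assumes scores normalized to 0..1 and uses made-up values; it does not describe how OpenMark AI computes variance internally.

```typescript
// Arithmetic mean of a non-empty list of scores.
function mean(values: number[]): number {
  if (values.length === 0) throw new Error("need at least one value");
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Sample standard deviation (n - 1 denominator); 0 when fewer than two runs.
function sampleStdDev(values: number[]): number {
  if (values.length < 2) return 0;
  const m = mean(values);
  const sumSquares = values.reduce((sum, v) => sum + (v - m) ** 2, 0);
  return Math.sqrt(sumSquares / (values.length - 1));
}

// Two hypothetical models with the same average score but different stability.
const stableScores = [0.8, 0.8, 0.8, 0.8];
const erraticScores = [1.0, 0.6, 1.0, 0.6];

const stableSpread = sampleStdDev(stableScores);
const erraticSpread = sampleStdDev(erraticScores);
```

Both lists average about 0.8, so a mean alone would rate the models equally; only the spread shows that the second one is unpredictable.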

Use Cases

Lovalingo

SaaS Founders Scaling Internationally

SaaS founders seeking to expand their offerings into global markets can leverage Lovalingo to quickly and efficiently translate their applications. By automating the translation process, they can focus on growth strategies rather than getting bogged down in manual localization tasks.

Agencies Building on Lovable

Agencies that develop applications on platforms like Lovable can benefit from Lovalingo's seamless integration. This allows them to provide their clients with multilingual websites without the complexity of traditional translation methods, ensuring faster project delivery.

Developers Who Dislike Manual i18n

For developers frustrated with the tedious nature of managing manual i18n processes, Lovalingo offers a welcome solution. The tool's automatic translation capabilities mean that developers can spend less time worrying about language support and more time coding.

Businesses Seeking Seamless User Experiences

Businesses that want to provide a smooth user experience for diverse audiences can utilize Lovalingo to ensure their applications are accessible in multiple languages. The zero-flash UI and native SEO features enhance user engagement and improve global reach.

OpenMark AI

Pre-Deployment Model Selection

Before integrating an LLM into a new chatbot, content generation feature, or data processing pipeline, teams can use OpenMark AI to validate which model from the vast available catalog best fits their workflow. This ensures the chosen model aligns with required quality, cost constraints, and performance benchmarks, reducing the risk of post-launch failures or budget overruns.

Cost Optimization for Existing Features

For teams already using an LLM API, OpenMark AI serves as a tool for periodic cost-performance reviews. By benchmarking their current task against newer or alternative models, they can identify if a different provider offers comparable quality at a lower cost or better performance for the same budget, leading to significant long-term savings.

Evaluating Model Consistency for Critical Tasks

When building applications where output reliability is non-negotiable, such as legal document analysis, medical information extraction, or financial summarization, testing for consistency is key. OpenMark AI's variance analysis helps teams disqualify models with high output fluctuation and select those that deliver dependable results across repeat runs.

Prototyping and Research for AI Products

Researchers and product innovators exploring new AI capabilities can use OpenMark AI to rapidly prototype ideas. By quickly testing how different models handle a novel task like complex agent routing or multimodal analysis, they can gather data on feasibility and performance without investing in extensive infrastructure or API integrations upfront.

Overview

About Lovalingo

Lovalingo is an AI translation tool designed for developers who build React and Next.js applications, including apps created with vibe-coding tools. It addresses common problems with traditional internationalization (i18n), such as manual JSON string management, broken layouts, and SEO complications. Lovalingo automates the translation process so developers can focus on building and scaling their applications. Because it translates at render time, it does not need to manipulate the DOM after load, which avoids flicker and layout shifts. The tool is aimed at SaaS founders expanding into international markets, agencies looking for efficient solutions, and developers who prefer a hassle-free approach to i18n. Lovalingo’s main value proposition is deploying multilingual websites with zero maintenance, so your content reaches a global audience without compromising performance or SEO.

About OpenMark AI

Choosing the right large language model (LLM) for your AI feature is a high-stakes gamble. Relying on marketing benchmarks or testing one model at a time leaves you guessing about real-world performance, true cost, and output consistency. This uncertainty leads to shipping features that are too expensive, unreliable, or underperforming. OpenMark AI addresses this pre-deployment challenge. It is a hosted web application designed for developers and product teams to perform task-level LLM benchmarking. You describe your specific task in plain language (for example data extraction, translation, or agent routing) and run the same prompts against a catalog of over 100 models in a single session. The platform provides side-by-side comparisons using real API calls, not cached data, measuring scored quality, cost per request, latency, and stability across repeat runs to show variance. You can then see which model consistently delivers quality for your task at a sustainable cost. With a hosted credit system, you avoid configuring multiple API keys, so benchmarking requires no provider setup. OpenMark AI is built for teams that care about cost efficiency (quality relative to price) and consistency.

Frequently Asked Questions

Lovalingo FAQ

How does Lovalingo improve SEO for multilingual sites?

Lovalingo enhances SEO by automatically generating multilingual sitemaps, hreflang tags, and meta descriptions, ensuring that your site is indexed effectively in various languages from day one.

Is Lovalingo suitable for all types of web applications?

Not all of them. Lovalingo is designed specifically for React and Next.js applications, so it is best suited to developers building web applications with those frameworks.

How quickly can I set up Lovalingo?

Setup is quick. Paste one prompt into your AI coding tool and you can be live in over 20 languages within 60 seconds.

What kind of support does Lovalingo offer for developers?

Lovalingo provides extensive documentation and an easy-to-use interface, allowing developers to integrate and manage translations seamlessly without the need for constant support.

OpenMark AI FAQ

How does OpenMark AI differ from standard model leaderboards?

Standard leaderboards often use generic, one-size-fits-all benchmarks (like MMLU or HellaSwag) that may not reflect your specific task. They also typically show "best-case" or cached results. OpenMark AI asks you to describe your actual task, runs fresh API calls against models in real time, and measures the metrics that matter for deployment: your task's quality score, actual API cost, latency, and consistency across multiple runs.

Do I need my own API keys to use OpenMark AI?

No, one of the core conveniences of OpenMark AI is that it operates on a hosted credit system. You purchase credits through OpenMark and the platform manages the API calls to providers like OpenAI, Anthropic, and Google on your behalf. This eliminates the need to sign up for, configure, and manage multiple API keys just to run a comparison.

What kind of tasks can I benchmark with OpenMark AI?

You can benchmark virtually any task you would use an LLM for. The platform is designed for task-level evaluation, including but not limited to text classification, translation, data extraction from documents, question answering, content generation, code explanation, sentiment analysis, and testing components of Retrieval-Augmented Generation (RAG) or agentic workflows.

How does OpenMark AI measure the "quality" of a model's output?

Quality scoring is based on the specific task you define. The platform uses automated evaluation methods tailored to your benchmark's goal. This could involve checking for correctness against a defined answer, using a more powerful LLM as a judge to grade responses, or employing other metrics like semantic similarity. The method is configured to align with your success criteria.
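As an illustration of the simplest option mentioned above, checking correctness against a defined answer, here is a minimal exact-match scorer. The `normalize` and `exactMatchScore` helpers and the sample outputs are hypothetical; OpenMark AI's actual scoring methods are not documented here.

```typescript
// Hypothetical scorer: one possible "correctness against a defined answer" check.
// Normalization ignores case and extra whitespace so trivial differences do not count as errors.
function normalize(text: string): string {
  return text.trim().toLowerCase().replace(/\s+/g, " ");
}

// Fraction of outputs that match the expected answer after normalization.
function exactMatchScore(outputs: string[], expected: string): number {
  if (outputs.length === 0) return 0;
  const target = normalize(expected);
  const hits = outputs.filter((output) => normalize(output) === target).length;
  return hits / outputs.length;
}

// Made-up model outputs for the question "What is the capital of France?"
const exactScore = exactMatchScore(["Paris", " paris ", "Lyon", "PARIS"], "Paris");
```

Exact match only suits tasks with a single short correct answer; open-ended tasks generally need a judge model or a similarity metric instead.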

Alternatives

Lovalingo Alternatives

Lovalingo is a cutting-edge translation tool specifically designed for React applications, focusing on automated, render-native translation that eliminates the hassle of traditional internationalization (i18n) methods. It streamlines the translation process by automatically generating multilingual sitemaps and meta descriptions, ensuring that developers can scale their applications to global markets without the common pitfalls of manual translations. Users often seek alternatives to Lovalingo due to varying needs such as pricing concerns, specific feature requirements, or compatibility with different platforms. When choosing an alternative, it's crucial to consider factors like ease of integration, maintenance requirements, and the ability of the tool to enhance SEO effectiveness while ensuring a seamless user experience.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level benchmarking of large language models. It helps teams compare cost, speed, quality, and stability across 100+ LLMs using real API calls, all from a single browser-based interface without needing individual provider keys. Users often explore alternatives for various reasons, such as needing a different pricing model, requiring deeper technical integrations like a dedicated API or SDK, or seeking tools focused on different stages of the AI lifecycle, like ongoing monitoring rather than pre-deployment validation. When evaluating other options, consider your core need: do you require hosted simplicity or self-hosted control? Are you benchmarking a specific, complex task or running general model evaluations? The right tool should align with your workflow, provide transparent cost and performance data, and fit your team's technical requirements.