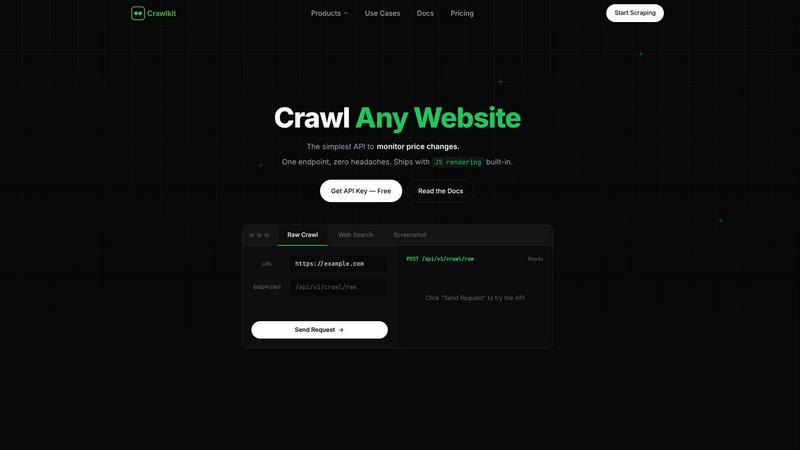

CrawlKit

CrawlKit is an API-first platform that simplifies real-time data extraction from any website with a single call.


About CrawlKit

CrawlKit is a web data extraction platform for developers and data teams who need scalable access to web data without managing scraping infrastructure. Rotating proxies, headless browsers, and anti-bot measures are common obstacles to collecting web data. CrawlKit handles them behind a single API request. The platform takes care of proxy rotation, browser rendering, and retrying failed requests, so developers can focus on using the data rather than collecting it.

Through one consistent interface, users can extract raw page content, search results, visual snapshots, and professional data from platforms such as LinkedIn. This removes much of the friction usually involved in web scraping for data-driven projects.

Features of CrawlKit

One API for All Data Sources

CrawlKit offers a unified API that allows users to extract structured data from various websites, social platforms, and app stores with just one request. This eliminates the need for multiple tools or different APIs, simplifying the process of data collection.

Complete and Clean Data Outputs

CrawlKit waits for pages to finish loading and validates each response before returning it. The aim is that developers do not receive partial or broken data, which makes the collected information more reliable.

Flexible Credit-Based Pricing

CrawlKit employs a transparent, credit-based pricing model that allows users to pay only for what they use. With no hidden fees and flexible options for purchasing additional credits, users can manage their expenses effectively while scaling their data extraction needs.

Robust Integration Capabilities

CrawlKit’s HTTP API can be called from any language or platform that can send HTTP requests, including Node.js, AWS, and Google. Developers are therefore not locked into a specific technology stack.
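The sketch below illustrates what a single-call integration over plain HTTP might look like, using only the Python standard library. It is not CrawlKit's documented API. The base URL, the `/scrape` path, the Bearer auth header, and the `url`/`render` body fields are all hypothetical placeholders; check the official documentation for the real names. Request construction is kept separate from sending, so the request shape can be inspected without network access.

```python
import json
import urllib.request

# HYPOTHETICAL placeholders: the real base URL, path, auth scheme, and
# parameter names are not given in this listing.
API_BASE = "https://api.crawlkit.example/v1"
API_KEY = "YOUR_API_KEY"


def build_scrape_request(target_url: str, render_js: bool = True) -> urllib.request.Request:
    """Build (but do not send) one POST request asking the service to fetch target_url."""
    payload = {"url": target_url, "render": render_js}  # hypothetical field names
    return urllib.request.Request(
        url=f"{API_BASE}/scrape",  # hypothetical endpoint path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",  # hypothetical auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


def fetch(target_url: str) -> dict:
    """Send the request and decode the JSON body (needs network access and a real key)."""
    req = build_scrape_request(target_url)
    with urllib.request.urlopen(req, timeout=60) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    req = build_scrape_request("https://example.com")
    print(req.get_method(), req.full_url)
```

Because the whole integration is one HTTP call, the same pattern carries over to Node.js or any other HTTP client.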

Use Cases of CrawlKit

CRM Enrichment

CrawlKit can be employed to automatically enrich Customer Relationship Management (CRM) systems with data from LinkedIn profiles. This includes pulling essential information such as job titles, company details, and contact information for leads, enhancing sales and marketing efforts.
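The sketch below shows one way extracted profile data might be merged into a CRM record. It fills only empty CRM fields, so values already entered by the sales team are not overwritten. The field names (`title`, `headline`, `company_name`, and so on) and the field mapping are hypothetical. The actual profile fields depend on CrawlKit's response schema and your CRM.

```python
from typing import Any


def enrich_record(crm_record: dict[str, Any], profile: dict[str, Any],
                  field_map: dict[str, str]) -> dict[str, Any]:
    """Return a copy of crm_record with empty fields filled from profile.

    field_map maps CRM field name -> profile field name (hypothetical names).
    Non-empty CRM values are kept as-is; the input record is not mutated.
    """
    enriched = dict(crm_record)
    for crm_field, profile_field in field_map.items():
        current = enriched.get(crm_field)
        incoming = profile.get(profile_field)
        # Only fill gaps, and only with a real value from the profile
        if current in (None, "") and incoming not in (None, ""):
            enriched[crm_field] = incoming
    return enriched


if __name__ == "__main__":
    lead = {"name": "Jane Doe", "title": "", "company": "Acme Corp"}
    profile = {"headline": "Head of Research", "company_name": "Acme"}
    mapping = {"title": "headline", "company": "company_name"}
    print(enrich_record(lead, profile, mapping))
    # {'name': 'Jane Doe', 'title': 'Head of Research', 'company': 'Acme Corp'}
```

Filling gaps rather than overwriting is a conservative default. A team that trusts the extracted data more than its own records could invert the rule.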

Competitive Intelligence

Businesses can utilize CrawlKit to gather competitive intelligence by tracking key metrics from competitors’ social media accounts or websites. This includes monitoring follower growth, engagement rates, and other performance indicators, allowing companies to stay ahead in their market.
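The metrics named above can be computed from periodic snapshots of a competitor's account. The sketch below is a minimal version. The `Snapshot` fields are hypothetical, and engagement rate uses one common definition: average likes plus comments per post, as a percentage of followers. Other tools use reach or impressions instead of followers.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """One point-in-time reading of an account (hypothetical fields)."""
    followers: int
    likes: int     # total likes on posts in the period
    comments: int  # total comments on posts in the period
    posts: int     # number of posts in the period


def follower_growth_pct(earlier: Snapshot, later: Snapshot) -> float:
    """Percentage change in followers between two snapshots."""
    if earlier.followers == 0:
        raise ValueError("earlier follower count must be non-zero")
    return (later.followers - earlier.followers) / earlier.followers * 100


def engagement_rate_pct(s: Snapshot) -> float:
    """Average (likes + comments) per post as a percentage of followers."""
    if s.followers == 0 or s.posts == 0:
        return 0.0
    return (s.likes + s.comments) / s.posts / s.followers * 100


if __name__ == "__main__":
    march = Snapshot(followers=20_000, likes=3_000, comments=200, posts=10)
    april = Snapshot(followers=21_000, likes=3_600, comments=400, posts=10)
    print(round(follower_growth_pct(march, april), 2))  # 5.0
    print(round(engagement_rate_pct(april), 2))
```

Tracking both numbers over time helps separate audience growth from audience activity.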

App Review Analysis

CrawlKit is ideal for extracting app reviews from platforms like the Play Store and App Store. By analyzing user feedback and ratings, businesses can gain insights into customer sentiment and improve their product offerings based on real user experiences.
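After reviews are extracted, a first pass at analysis is usually a rating summary. The sketch below computes the count, the average, and the percentage of reviews at each star level. It assumes each review is a dict with an integer `rating` from 1 to 5. That field name is hypothetical, so adapt it to the actual response schema. Reviews without a usable rating are skipped.

```python
from collections import Counter
from statistics import mean


def summarize_ratings(reviews: list[dict]) -> dict:
    """Summarize 1-5 star ratings from a list of extracted reviews."""
    ratings = [r["rating"] for r in reviews if isinstance(r.get("rating"), int)]
    if not ratings:
        return {"count": 0, "average": None, "distribution": {}}
    counts = Counter(ratings)
    return {
        "count": len(ratings),
        "average": round(mean(ratings), 2),
        # Percentage of reviews at each star level, 1 through 5
        "distribution": {
            star: round(100 * counts[star] / len(ratings), 1) for star in range(1, 6)
        },
    }


if __name__ == "__main__":
    sample = [{"rating": 5}, {"rating": 4}, {"rating": 1}, {"rating": 5}]
    print(summarize_ratings(sample))
    # {'count': 4, 'average': 3.75, 'distribution': {1: 25.0, 2: 0.0, 3: 0.0, 4: 25.0, 5: 50.0}}
```

A spike in the 1-star share after a release is often the first signal worth reading the review text for.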

Market Research

Researchers can use CrawlKit for market research by scraping data from sources such as e-commerce sites, social media, and review platforms. This data can be used to identify trends, consumer preferences, and competitive landscapes, supporting informed decision-making.

Frequently Asked Questions

What types of data can I extract using CrawlKit?

CrawlKit lets users extract raw web page content, search results, profile data from LinkedIn and Instagram, and app reviews from app stores, all through the same API.

Do I need to manage proxies and anti-bot measures?

No. CrawlKit handles proxy rotation, anti-bot measures, and the rest of the extraction infrastructure. Users can focus on working with the extracted data instead of the underlying mechanics of web scraping.

How does CrawlKit ensure data accuracy?

CrawlKit waits for pages to load fully and runs validation checks on each response before delivering it. This reduces the chance of receiving broken or partial output.

Is there a minimum commitment required for using CrawlKit?

CrawlKit operates on a pay-as-you-go model with no monthly commitments. Users can start with a free tier of credits and scale their usage according to their needs without any long-term obligations.


Similar to CrawlKit

Subiq

Subiq simplifies SaaS subscription management for small teams, helping you track spending and eliminate unused tools effortlessly.

Pterocos

Pterocos is a free online HTML editor that lets you code in real-time with instant AI assistance, no installation required.

CodeAva

CodeAva lets developers catch issues early with browser-based website audits, code validation, and practical tools that turn noisy checks into.

GhostlyX Privacy-First Web Analytics

GhostlyX delivers privacy-first web analytics that provide actionable insights while fully respecting your visitors' data privacy.

Microplastic Intake App

Track your daily microplastic intake from food, drinks, and air with research-backed assessments to minimize your exposure.

Webleadr

Webleadr effortlessly connects you to businesses without websites, enabling you to find and contact web design leads in just a few clicks.

TubeAnalytics

TubeAnalytics empowers YouTube creators with AI-driven insights to optimize growth, boost views, and maximize revenue effortlessly.

Metric Nexus

Metric Nexus simplifies your marketing data, allowing you to connect platforms and ask insightful questions easily with AI assistance.